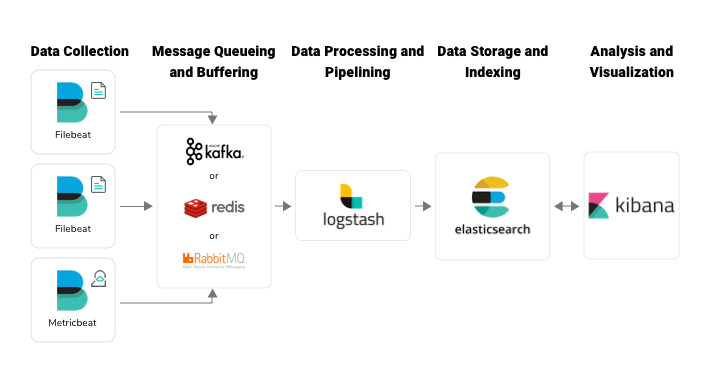

Organizations often use Elasticsearch with Logstash or Filebeat to ship web server logs, Windows events, Linux syslogs, and other data. I have gone through the link above, but the index is not working as described there. Just like other libraries, elasticsearch-hadoop needs to be available to Spark.

If you try to set a type on an event that already has one (for example, when you send an event from a shipper to an indexer), a new input will not override the existing type. The type is stored as part of the event itself, so you can also use it to search for the event in Kibana. Types are used mainly for filter activation.

Filebeat is a log data shipper for local files. The Filebeat agent is installed on each server whose logs you want to collect. Filebeat config: `filebeat.inputs: - type: log enabled: true paths: - D:\\Logs\\UIS\\CMS\\ fields: logtype: cmslog fields_under_root: true - type: log enabled: true paths: - D:\\Logs\\UIS\\MonitoringService\\ fields: logtype`, where the quoted configuration breaks off.

I am trying to configure Logstash to gather data from Filebeat and put it in different indices depending on the sources' filenames. I am putting my Logstash config below; any help will be greatly appreciated…

On VM 1 and VM 2 I have installed a web server and Filebeat, and on VM 3 I have installed Logstash. The Logstash input runs on a single port (for example 5043), and the filter stays the same for all environments. Is there a way to configure only the output section and route the logs based on host, or is there some other way? All dev logs should go to the dev index, all sys logs to the sys index, and all uat logs to the uat index. I am looking for help creating multiple indices in Elasticsearch with just a single Logstash config file.

You do not need a filter unless you want to manipulate the data. Most likely you will want a UDP or TCP input if you are listening on a port. You need to create a pipeline configuration with an input, an optional filter, and an output. The data is then forwarded on to Logstash for further processing. So create a configuration file in /etc/logstash/conf.d/ with the suggestions below.

A related question is titled "Create multiple indexes with same Logstash config file": I am trying to figure out how to deal with different types of log files using Filebeat as the forwarder. Basically, I have several different log files I want to monitor, and I want to add an extra field that identifies which log each entry came from, along with a few other small things.
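
The routing asked about above (one Beats input on a single port, one index per environment) could be sketched as a Logstash pipeline along the following lines. This is only a sketch under assumptions that are not in the original questions: each Filebeat instance is assumed to add a hypothetical `env` field (with the value `dev`, `sys`, or `uat`) through `fields` plus `fields_under_root: true`, Elasticsearch is assumed to be reachable at `http://localhost:9200`, and the `unrouted` fallback index name and the daily date suffix are illustrative choices.

```
# Sketch only: the `env` field, the Elasticsearch address, and the
# `unrouted` index name are assumptions, not taken from the original posts.
input {
  beats {
    port => 5043    # single port shared by all Filebeat senders
  }
}

# No filter block: it is optional unless the data needs to be manipulated.

output {
  if [env] in ["dev", "sys", "uat"] {
    elasticsearch {
      hosts => ["http://localhost:9200"]
      # The index name is built from the event's own field,
      # e.g. dev-2024.01.31; drop the date suffix for one index per env.
      index => "%{[env]}-%{+YYYY.MM.dd}"
    }
  } else {
    elasticsearch {
      hosts => ["http://localhost:9200"]
      # Events without a recognised env value land in a fallback index.
      index => "unrouted-%{+YYYY.MM.dd}"
    }
  }
}
```

The same pattern should work with the `logtype` field from the Filebeat config quoted earlier: referencing `%{[logtype]}` in the `index` setting would give one index per log type without a separate output block for each. Elasticsearch index names must be lowercase, so the field values need to be lowercase as well.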
